Surveillance, AI & ML readings

Articles & news

Meta boss praises new US army division enlisting tech execs as lieutenant colonels

Longtime Zuckerberg lieutenant Andrew Bosworth calls donning fatigues with Palantir and OpenAI brass ‘great honor’

Andrew Bosworth, a long-term lieutenant to Mark Zuckerberg known widely as “Boz”, is one of several senior Silicon Valley executives commissioned to the rank of lieutenant colonel in the corps, called Detachment 201, which the US army says will “fuse cutting-edge tech expertise with military innovation”.

📖 Article on theguardian.com

Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity

We conduct a randomized controlled trial (RCT) to understand how early-2025 AI tools affect the productivity of experienced open-source developers working on their own repositories. Surprisingly, we find that when developers use AI tools, they take 19% longer than without—AI makes them slower. We view this result as a snapshot of early-2025 AI capabilities in one relevant setting; as these systems continue to rapidly evolve, we plan on continuing to use this methodology to help estimate AI acceleration from AI R&D automation.

The Pentagon says AI is speeding up its ‘kill chain’

Leading AI developers, such as OpenAI and Anthropic, are threading a delicate needle to sell software to the United States military: make the Pentagon more efficient, without letting their AI kill people. “We obviously are increasing the ways in which we can speed up the execution of kill chain so that our commanders can respond in the right time to protect our forces,”

The “kill chain” refers to the military’s process of identifying, tracking, and eliminating threats, involving a complex system of sensors, platforms, and weapons. Generative AI is proving helpful during the planning and strategizing phases of the kill chain...

📖 Original article on techcrunch.com

📖 OpenAI changes policy to allow military applications on techcrunch.com

📖 Google removes pledge to not use AI for weapons on techcrunch.com

📖 Meta says it’s making its Llama models available for US national security applications on techcrunch.com

📖 Anthropic teams up with Palantir and AWS to sell AI to defense customers on techcrunch.com

📖 Amazon doubles down on Anthropic, completing its planned $4B investment on techcrunch.com

Why AI Usage May Degrade Human Cognition And Blunt Critical Thinking Skills

Either meaning Artificial Inference or Artificial Intelligence depending on who you ask, AI has seen itself used mostly as a way to ‘assist’ people. Whether in the form of a chat client to answer casual questions, or to generate articles, images and code, its proponents claim that it’ll make workers more efficient and remove tedium.

In a recent paper published by researchers at Microsoft and Carnegie Mellon University (CMU), however, the findings from a survey are that the effect is mostly negative. Does so-called generative AI (GAI) turn workers into monkeys who mindlessly regurgitate whatever falls out of the Magic Machine, or is there true potential for removing tedium and increasing productivity?

📖 Article on hackaday.com

📖 Original paper on microsoft.com

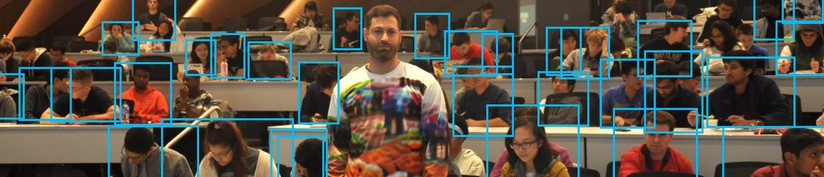

Excavating AI: The Politics of Images in Machine Learning Training Sets

...In this essay, we will explore why the automated interpretation of images is an inherently social and political project, rather than a purely technical one. Understanding the politics within AI systems matters more than ever, as they are quickly moving into the architecture of social institutions: deciding whom to interview for a job, which students are paying attention in class, which suspects to arrest, and much else...

...Datasets aren’t simply raw materials to feed algorithms, but are political interventions. As such, much of the discussion around “bias” in AI systems misses the mark: there is no “neutral,” “natural,” or “apolitical” vantage point that training data can be built upon. There is no easy technical “fix” by shifting demographics, deleting offensive terms, or seeking equal representation by skin tone. The whole endeavor of collecting images, categorizing them, and labeling them is itself a form of politics, filled with questions about who gets to decide what images mean and what kinds of social and political work those representations perform...

📖 Essay by Kate Crawford and Trevor Paglen

‘The Gospel’: how Israel uses AI to select bombing targets in Gaza

There has, however, been relatively little attention paid to the methods used by the Israel Defense Forces (IDF) to select targets in Gaza, and to the role artificial intelligence has played in their bombing campaign.

...in particular, to deploy an AI target-creation platform called “the Gospel”, which has significantly accelerated a lethal production line of targets that officials have compared to a “factory”.

📖 Original article on theguardian.com

📖 Spanish version of the article on eldiario.es

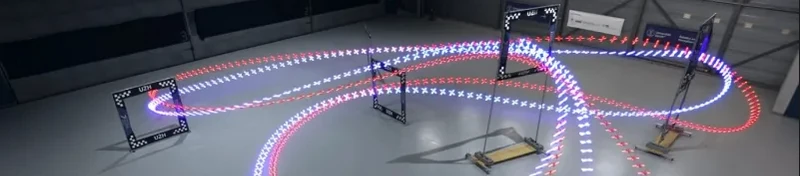

Autonomous Racing Drones Are Starting To Beat Human Pilots

Even with all the technological advancements in recent years, autonomous systems have never been able to keep up with top-level human racing drone pilots. However, it looks like that gap has been closed with Swift – an autonomous system developed by the University of Zurich’s Robotics and Perception Group.

📖 Original article in Nature magazine and video on youtube.com

📖 Article on hackaday.com

Documents Reveal Advanced AI Tools Google Is Selling to Israel

Training materials reviewed by The Intercept confirm that Google is offering advanced artificial intelligence and machine-learning capabilities to the Israeli government through its controversial “Project Nimbus” contract. The Israeli Finance Ministry announced the contract in April 2021 for a $1.2 billion cloud computing system jointly built by Google and Amazon. “The project is intended to provide the government, the defense establishment and others with an all-encompassing cloud solution,” the ministry said in its announcement.

Google, like Microsoft, has its own public list of “AI principles,” a document the company says is an “ethical charter that guides the development and use of artificial intelligence in our research and products.” Among these purported principles is a commitment to not “deploy AI … that cause or are likely to cause overall harm,” including weapons, surveillance, or any application “whose purpose contravenes widely accepted principles of international law and human rights.”

📖 Article on theintercept.com

📖 Project Nimbus page on Wikipedia

📖 Article in Time magazine about Google employees protesting the Project Nimbus contract. More info on fired or arrested employees on nbcnews.com and theverge.com.

Making an Invisibility Cloak: Real World Adversarial Attacks on Object Detectors

This paper studies the art and science of creating adversarial attacks on object detectors. Most work on real-world adversarial attacks has focused on classifiers, which assign a holistic label to an entire image, rather than detectors which localize objects within an image. Detectors work by considering thousands of “priors” (potential bounding boxes) within the image with different locations, sizes, and aspect ratios. To fool an object detector, an adversarial example must fool every prior in the image, which is much more difficult than fooling the single output of a classifier.
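The contrast drawn in the abstract can be illustrated with a toy sketch. This is not the paper's method: the function names, the 0.5 threshold, and the example scores are all hypothetical, and real detectors add steps such as non-maximum suppression that are left out here. The sketch only shows why a detector is the harder target: a classifier attack has to push one score below the threshold, while a detector attack has to push every prior's score below it.

```python
# Toy illustration only: hypothetical names, threshold, and scores.

def classifier_fooled(score: float, threshold: float = 0.5) -> bool:
    """A classifier gives one score per image; the attack wins if it falls below the threshold."""
    return score < threshold


def detector_fooled(prior_scores: list[float], threshold: float = 0.5) -> bool:
    """A detector scores many priors; the attack wins only if every one falls below the threshold."""
    return all(score < threshold for score in prior_scores)


# Hypothetical per-prior confidence scores after an adversarial patch is applied.
scores_after_attack = [0.10, 0.20, 0.05, 0.70, 0.30]

# Treating the first score as a lone classifier output, the attack succeeds.
print(classifier_fooled(scores_after_attack[0]))

# As a detector, one surviving prior (0.70) is enough for the object to be found.
print(detector_fooled(scores_after_attack))
```

In this toy setting a single prior that keeps a high score defeats the attack, which is the intuition behind the abstract's claim that detectors are much harder to fool than classifiers.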

Who are we talking to when we talk to these bots?

...digging deeper, one encounters some nagging follow-up questions about ChatGPT’s account of itself. A language model is a very specific thing: a probability distribution over sequences of words. So we’re talking to a probability distribution? That’s like saying we’re talking to a parabola. Who is actually on the other side of the chat?...

Interesting article by Colin Fraser on medium.com
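To make "a probability distribution over sequences of words" concrete, here is a minimal sketch of a bigram model in Python. It is an illustration only, not how ChatGPT works: the three-sentence corpus, the function names, and the `<s>` / `</s>` boundary markers are assumptions made for the example. The model assigns a probability to a whole sentence by multiplying the probabilities of each word given the word before it.

```python
from collections import Counter, defaultdict

# Hypothetical boundary markers for the start and end of a sentence.
START, END = "<s>", "</s>"


def train_bigram(corpus):
    """Count how often each word follows each other word in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = [START] + sentence.split() + [END]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def sequence_probability(counts, sentence):
    """Probability of the whole sentence: product of P(next word | previous word)."""
    tokens = [START] + sentence.split() + [END]
    prob = 1.0
    for prev, nxt in zip(tokens, tokens[1:]):
        total = sum(counts[prev].values())
        if total == 0:
            return 0.0  # the previous word was never seen in training
        prob *= counts[prev][nxt] / total
    return prob


# Toy corpus, assumed purely for illustration.
corpus = ["the cat sat", "the dog sat", "the cat ran"]
model = train_bigram(corpus)

print(sequence_probability(model, "the cat sat"))  # about 0.33
print(sequence_probability(model, "the dog ran"))  # 0.0, "dog ran" never occurs
```

Nothing in this object resembles a speaker: it is a table of counts that maps any word sequence to a number, which is the point the excerpt makes with its parabola comparison.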

Books

📚 Atlas of AI

Power, Politics, and the Planetary Costs of Artificial Intelligence

Kate Crawford, 2021

The hidden costs of artificial intelligence, from natural resources and labor to privacy and freedom. What happens when artificial intelligence saturates political life and depletes the planet?

How is AI shaping our understanding of ourselves and our societies? In this book Kate Crawford reveals how this planetary network is fueling a shift toward undemocratic governance and increased inequality.

📚 The Age of Surveillance Capitalism

The Fight for a Human Future at the New Frontier of Power

Shoshana Zuboff, 2018

Shoshana Zuboff, named "the true prophet of the information age" by the Financial Times, has always been ahead of her time. Her seminal book In the Age of the Smart Machine foresaw the consequences of a then-unfolding era of computer technology. Now, three decades later, she asks why the once-celebrated miracle of digital is turning into a nightmare. Zuboff tackles the social, political, business, personal, and technological meaning of "surveillance capitalism" as an unprecedented new market form...

📚 Infocracy

Digitization and the Crisis of Democracy

Byung-Chul Han, 2022

An astute analysis of the information regime, the new government to which we are subjected, by the most widely read philosopher of the 21st century. Digitalisation advances inexorably. Stunned by the frenzy of communication and information, we feel powerless in the face of the tsunami of data that deploys destructive and deforming forces. Today, digitisation also affects the political sphere and causes serious disruption to the democratic process. Election campaigns are information wars waged with every conceivable technical and psychological means.